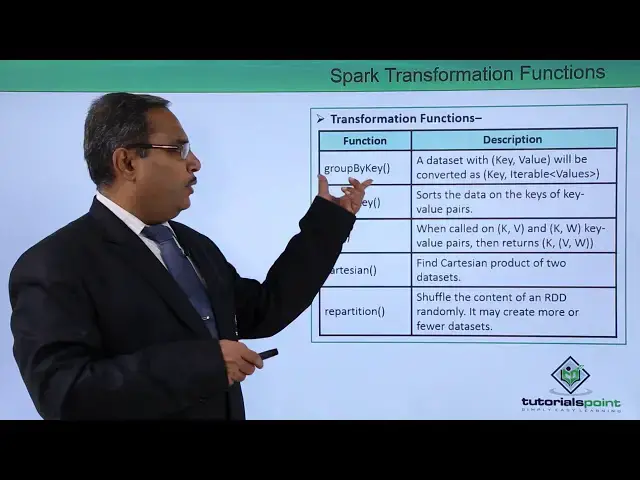

Spark Transformation Functions

Show More Show Less View Video Transcript

0:00

in this video we are discussing spark

0:02

transformation functions so there are so

0:05

many functions are there and you'll be

0:07

concentrating only those functions which

0:09

are very much regularly used and in our

0:12

spark related applications so here we

0:16

are having one table the first column is

0:18

containing the function name and the

0:20

sinem column is containing the purpose

0:22

and description of the function so first

0:24

function is map so returns new or DT

0:27

formed by passing elements from the

0:30

source through the map so in the sub map

0:33

the user can put their respective

0:35

business logic and here it will take the

0:38

same partitions same number of

0:40

partitions as as input and same number

0:42

of partitions will be produced as output

0:44

so that is a main concept are in this

0:47

map and like all transformation

0:50

functions it will take our duty as input

0:53

and produces our DD as output next one

0:56

is the filter returns new or DD by

0:59

selecting those element who where filter

1:02

returns true so filtered will have some

1:04

criteria so depending upon the criteria

1:07

those records will be selected from the

1:10

input to the output which are matching

1:12

with the criteria next one is a flat map

1:16

so here each input item can be mapped to

1:19

0 or more output items so here in case

1:23

of flat map it will have say in number

1:26

of partitions as input and it can

1:28

produce 0 1 or more than 1 partitions as

1:32

output so that is a basic concept of

1:34

this flat map method and here each input

1:38

item can be mapped to 0 or more than

1:41

output items and more output items and

1:44

that is known as the flat map method we

1:47

are having a separate video on this map

1:49

and flatmap method you can also watch

1:51

that one next one is the map partitions

1:54

this particular method so same as map

1:57

but runs map tasks for each partitions

2:00

of an RDD next one is Union so returns

2:05

the new oddity of the Performing Union

2:08

operation on the data so it will return

2:10

that separate another ID or Duty there

2:13

is a

2:13

or did he and which is performing the

2:16

union of our data next we are going for

2:20

group by key method so this particular

2:23

function is that a data set with key

2:26

value pair will be converted to key and

2:28

then iterable values because it is a

2:31

group by key so he will be there for the

2:34

same key that it a table on the values

2:37

will be obtained in case of group by key

2:40

method next one is the shot by key

2:43

function and shorts the data on the key

2:45

of key value pairs so it will shot it

2:49

depending upon the key value pairs so

2:51

depending upon the key in the key value

2:53

pairs next one is the joint function and

2:56

when called on kV and kW the key value

3:01

pairs

3:01

it returns K V Kamata blue and that is

3:05

known as the joint operation next one is

3:08

the Kardashian so fine Cartesian product

3:11

of two data sets that means all possible

3:14

combinations of two data sets next one

3:16

is the repartition and suffle the

3:19

content of an RDD randomly and it may

3:22

create more fewer more or fewer data

3:26

sets and that is known as the real

3:28

partition so these are the different

3:30

functions which are available in our

3:32

transformation on our didi thanks for

3:35

watching this video

#Programming