Data Backup & Backup Options

0:00

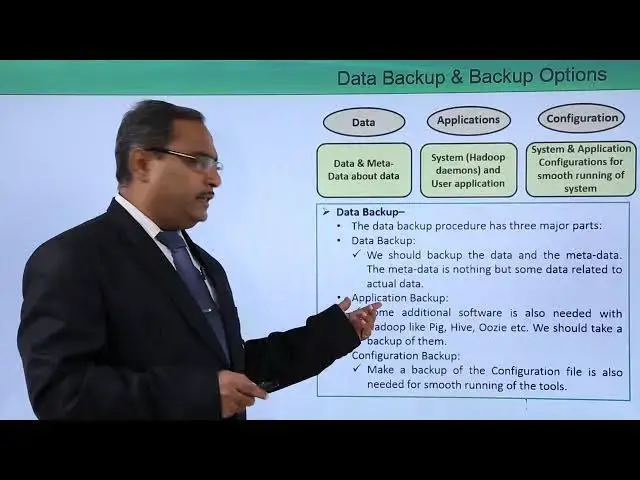

In this video we are discussing data backup and backup options

0:05

Whenever Hadoop infrastructure or hardware fails, we may have to suffer

0:10

from data loss, software loss, and configuration loss. That's why we should take a backup of all three

0:20

That is, we require data backup, software backup, and configuration backup for the smooth running

0:26

of the system when it is restored. So, let us discuss this topic in more detail

0:34

So, first we require the data backup, that is, the data and the metadata about the data, and

0:39

then the application backup, that is, the system, the Hadoop daemons, and user applications, and then we

0:45

require the configuration backup as well, and this configuration holds the system

0:50

and application configurations for the smooth running of the system. So the backup procedure has three

0:58

major parts. The first one is the data backup. So we should back up the data and

1:05

the metadata. The metadata is simply data that describes the actual data. So here in this

1:12

metadata we will have node-related information, replica-related information,

1:18

and so on. That's why we should back up the data as well as the metadata

1:24

Next we have the application backup. Some additional software is also needed with Hadoop, like Pig, Hive, and Oozie. We usually download and install this software on our Hadoop system, so we should take a backup of it as well

1:42

Otherwise, that software will be lost. Next, we come to the configuration backup

1:48

So making a backup of the configuration files is also needed for the smooth running of the respective tools

1:55

So this configuration backup is also required along with the data and application backups
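
As an illustration of the configuration backup described above, here is a minimal Python sketch that packs a configuration directory into a compressed archive. The directory layout, the archive name, and the function name `backup_config` are assumptions made for this example; `core-site.xml` and `hdfs-site.xml` appear only as stand-in files in a temporary directory.

```python
import tarfile
import tempfile
from pathlib import Path
from typing import List


def backup_config(conf_dir: Path, archive_path: Path) -> List[str]:
    """Pack every file under conf_dir into a gzip-compressed tar archive.

    Returns the sorted list of archived file names so the caller can
    check that nothing was skipped.
    """
    with tarfile.open(archive_path, "w:gz") as tar:
        # arcname stores paths relative to the directory name, not the full path
        tar.add(conf_dir, arcname=conf_dir.name)
    with tarfile.open(archive_path, "r:gz") as tar:
        return sorted(m.name for m in tar.getmembers() if m.isfile())


# Demo on a throwaway directory; the two XML files are placeholders for
# whatever configuration files a real installation keeps.
with tempfile.TemporaryDirectory() as tmp:
    conf = Path(tmp) / "conf"
    conf.mkdir()
    (conf / "core-site.xml").write_text("<configuration/>")
    (conf / "hdfs-site.xml").write_text("<configuration/>")
    archived = backup_config(conf, Path(tmp) / "conf-backup.tar.gz")
    print(archived)
```

In practice the archive would be written somewhere outside the machine being backed up, so that a hardware failure does not take the backup with it.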

2:01

How to back up data. Let us discuss this aspect. We need to decide which data are critical and which are less important

2:12

Obviously, the important and critical data must be backed up first
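
The idea of backing up critical data first can be sketched as a simple ordering step. This is only an illustration: the `Dataset` class, the paths, and the sizes are hypothetical, and the tie-break of putting smaller datasets first within each group is an assumption of this sketch, not something stated in the video.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dataset:
    path: str       # hypothetical HDFS path
    critical: bool  # decided by the data owner, not computed here
    size_gb: float


def backup_order(datasets):
    """Critical datasets first; within each group, smaller ones first."""
    return sorted(datasets, key=lambda d: (not d.critical, d.size_gb))


datasets = [
    Dataset("/logs/raw", critical=False, size_gb=900.0),
    Dataset("/warehouse/orders", critical=True, size_gb=120.0),
    Dataset("/warehouse/customers", critical=True, size_gb=15.0),
]
ordered = [d.path for d in backup_order(datasets)]
print(ordered)
```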

2:17

Also, taking a backup of an extremely large amount of data is very difficult and time

2:24

consuming, and it will require a huge amount of space. The distributed copy method is a very useful tool

2:31

used to overcome these problems, and this tool is also known as DistCp, that is, distributed

2:39

copy, and it can copy data from one Hadoop cluster to another Hadoop cluster

2:45

So this method is known as distributed copy, and the respective command is distcp
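
To make the distributed copy command concrete, the following Python sketch builds a `hadoop distcp` command line without running it. The cluster addresses `nn1` and `nn2`, the paths, and the helper name `distcp_command` are assumptions for illustration; `-update` and `-m` are standard DistCp options, but which options a given backup needs depends on the cluster.

```python
import shlex
from typing import List, Optional


def distcp_command(src: str, dst: str, update: bool = True,
                   max_maps: Optional[int] = None) -> List[str]:
    """Build the argument list for a cluster-to-cluster DistCp run."""
    cmd = ["hadoop", "distcp"]
    if update:
        cmd.append("-update")  # copy only files missing or changed at the target
    if max_maps is not None:
        cmd += ["-m", str(max_maps)]  # upper bound on simultaneous copy tasks
    cmd += [src, dst]
    return cmd


# Hypothetical source and backup clusters.
cmd = distcp_command("hdfs://nn1:8020/data", "hdfs://nn2:8020/backup/data", max_maps=20)
print(shlex.join(cmd))
```

On a machine with a Hadoop client installed, the resulting list could be passed to `subprocess.run(cmd, check=True)` to start the copy.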

2:53

FRIM is another way to copy data in parallel fashion from one cluster to another cluster

3:00

So, in this way, we can take a backup of our data. As for what is to be backed up, that

3:05

is the data with its metadata, the applications, and the configuration

3:11

Thanks for watching this video
